This week, our team found the answer to our biggest development hurdle- DocumentCloud. Prior to this discovery, we were trying to figure out how to create a relational database, which would store meta tags of our corpus, that would respond to user input in our website’s search form.

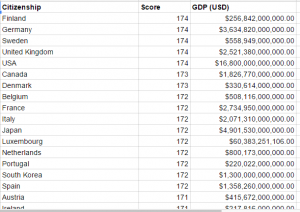

It turns out that DocumentCloud, with an Open Calais backend, is able to create semantic metadata from document uploads and can pull the entities within the text. The ability to recognize entities (places, people, organizations) is particularly helpful for our project since these would be potential search categories. We are also able to create customized search categories through DocumentCloud by creating key value pairs. On Tuesday, we uploaded our 5 HUAC testimonies and started to create key value pairs, which are based on our taxonomy. (Earlier this week, we finalized our taxonomy after receiving feedback on our taxonomy from Professor Schrecker at Yeshiva University and Professor Cuordileone at CUNY City Tech.) In order to create these key value pairs, we had to read through each transcript and pull our answers, like this:

| Field | Notes & Examples | Rand | Brecht | Disney | Reagan | Seeger |

| Hearing Date | year-mo-day, 2015-03-10 | 1947-10-20 | 1947-10-30 | 1947-10-24 | 1947-10-23 | 1955-08-18 |

| Congressional Session number | 80th | 80th | 80th | 80th | 84th | |

| Subject of Hearing | Hollywood | Hollywood | Hollywood | Hollywood | Hollywood | |

| Hearing location | City, 2 letter state | Washington, DC | Washington, DC | Washington, DC | Washington, DC | New York, NY |

| Witness Name | Last Name, First Middle | Rand, Ayn | Brecht, Bertolt | Disney, Walt | Reagan, Ronald W. | Seeger, Pete |

| Witness Occupation | or profession | Author | Playwright | Producer | Actor | Musician |

| Witness Organizational Affiliation | Walt Disney Studios | Screen Actors Guild | People’s Songs | |||

| Type of Witness | Friendly or Unfriendly | Friendly | Unfriendly | Friendly | Friendly | Unfriendly |

| Result of appearance | contempt charge, blacklist, conviction | Blacklist | Contempt charge, but successfully appealed; Blacklist |

With DocumentCloud thrown back into the mix, we had to take a step back and start again with site schematics. We discussed each step of how the user would move through the site, down to the click, and how the backend would work to fulfill the user input in the search form. (Thanks, Amanda!) In terms of development, we will need to create a script (Python or PHP) that will allow the user’s input in the search box to “talk” to the DocumentCloud API and pull the appropriate data.

Amanda mentioned DocumentCloud to us a while ago, but our group thought it was more of a repository than a tool, so our plan was to investigate it later, after we figured out how to build a database. After hounding the Digital Fellows for the past couple of weeks on how to create a relational database, they finally told us, “You need to look at DocumentCloud.” Moral of the story: Question what you think you know.

On the design front, we started working in Bootstrap and have been experimenting with Github. We were able to push a test site through Github pages, but we still need to work on how to upload the rest of our site directory. This is our latest design of the site: