Confirmation of Timing on May 19th

All:

I have confirmed with Matt that DH Praxis will have an hour total to present on May 19th. This breaks down to 12-15 minutes for each group (Amanda and I will do a brief intro and framing, no more than 3-4 minutes, and then you’ll all take it away).

Come to class Tuesday with ideas and questions about your presentations.

Luke

Fashion Index Weekly Update

We have started framing and accelerating our storytelling, and for now we have paused building out the development side. This week, we are focusing on the narrative. I also tweeted from our Twitter account. Compared to Instagram, I haven’t used Twitter actively, and I would like to balance our use of these two distinct social media platforms. We now have 28 Instagram followers!

We updated the website. We included the essays in the theory section and mentioned Digital HUAC, CUNY Cast, and DH TANDEM in the related work section. For the theory section, Jojo gave us her statement about our project; thanks a lot! Renzo and Tessa also added their statements to the theory section, and Minn added statements to the “About” section. To see more updates, please visit http://nycfashionindex.com

On the technical side, we are cleaning irrelevant data, fixing the image sets, and indexing the pages.

At this stage, we are gathering written contributions from our team members, classmates, and others in the fashion studies community. We are also planning to start shaping the final paper.

Thanks for the photo, CUNY Cast! This picture was actually taken during our first presentation on March 17th.

LAUNCH PRESS RELEASE

Hey all-

Please take a moment to contribute to the press release. The link again:

https://github.com/amandabee/CUNY-Digital-Praxis-Seminar/wiki/Press-Release

Once we know what’s going on with the whole event, we can move beyond the team #hashtags portion.

Thanks,

Jojo

TANDEM Project Update 4.26.15

WEEK 12 TANDEM PROJECT UPDATE:

This has been a week of accelerated achievement on all fronts for TANDEM. Thanks to Steve, we have a working MVP hosted on www.dhtandem.com/tandem. We have also made huge strides on the front end with Kelly’s robust initial set of HTML/CSS pages for the site. While the two ends are not tied together just yet, they are within sight as of this weekend. Jojo continues to surprise the group with her intuitive mix of outreach and awesomeness, having sent out personalized invitations to key members of our contact list and to people who have shown interest over the past few months. Keep reading for more detailed information about these and other developments.

DEVELOPMENT / DESIGN:

MVP functionality added this week includes:

- Ability to upload multiple files

- Ability to persist data via an SQLite database containing project data and pointers to file locations

- Backend analytic code connected to the front end

- Ability to zip and download results
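
To make the persistence and download items concrete, here is a minimal sketch using Python's built-in sqlite3 and zipfile modules. It is not TANDEM's actual schema: the table names (project, upload) and the helper functions are hypothetical, and it assumes the database stores only file paths while the files themselves live on disk.

```python
import sqlite3
import zipfile
from pathlib import Path


def init_db(conn: sqlite3.Connection) -> None:
    """Create two hypothetical tables: one row per project, one per uploaded file."""
    conn.executescript(
        """
        CREATE TABLE IF NOT EXISTS project (
            id   INTEGER PRIMARY KEY,
            name TEXT NOT NULL
        );
        CREATE TABLE IF NOT EXISTS upload (
            id         INTEGER PRIMARY KEY,
            project_id INTEGER NOT NULL REFERENCES project(id),
            path       TEXT NOT NULL  -- pointer to the file on disk, not its contents
        );
        """
    )


def add_project(conn: sqlite3.Connection, name: str, file_paths) -> int:
    """Store a project and a pointer (path) for each of its files."""
    cur = conn.execute("INSERT INTO project (name) VALUES (?)", (name,))
    project_id = cur.lastrowid
    conn.executemany(
        "INSERT INTO upload (project_id, path) VALUES (?, ?)",
        [(project_id, str(p)) for p in file_paths],
    )
    conn.commit()
    return project_id


def zip_results(conn: sqlite3.Connection, project_id: int, archive_path) -> Path:
    """Look up a project's file pointers and bundle those files into one .zip."""
    rows = conn.execute(
        "SELECT path FROM upload WHERE project_id = ? ORDER BY id", (project_id,)
    ).fetchall()
    archive_path = Path(archive_path)
    with zipfile.ZipFile(archive_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for (path,) in rows:
            zf.write(path, arcname=Path(path).name)  # flat archive, no server paths
    return archive_path
```

Storing paths rather than file contents keeps the database small; the tradeoff is that the stored paths must stay valid if files are ever moved.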

Remaining tasks are:

- Implement polished UI

- Implement error handling

- Handle session management so that simultaneous users keep their data separate

- Look for opportunities to gain efficiency

- Correct a small bug in the OpenCV output

- Review security and backup file-storage approaches, and rework them as needed to follow best practices.
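
For the session-management item, one common approach is to give each user session its own upload directory keyed by a random identifier, so simultaneous users never write into the same folder. The sketch below assumes that approach; SessionStore and its methods are hypothetical names, not part of TANDEM or Django, and in a Django app the framework's own session machinery could supply the key instead of uuid4.

```python
import uuid
from pathlib import Path


class SessionStore:
    """Hypothetical helper: one private upload directory per user session."""

    def __init__(self, root):
        self.root = Path(root)
        self.sessions = {}  # session key -> that session's directory

    def start_session(self) -> str:
        key = uuid.uuid4().hex  # random, hard-to-guess identifier
        directory = self.root / key
        directory.mkdir(parents=True, exist_ok=False)
        self.sessions[key] = directory
        return key

    def save_upload(self, key: str, filename: str, data: bytes) -> Path:
        directory = self.sessions[key]  # raises KeyError for unknown sessions
        safe_name = Path(filename).name  # drop directory parts sent by the client
        target = directory / safe_name
        target.write_bytes(data)
        return target

    def list_uploads(self, key: str):
        return sorted(p.name for p in self.sessions[key].iterdir())
```

Stripping directory components from the client-supplied filename also keeps one user from writing into another user's folder with a name like ../poem.txt.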

OUTREACH:

Continuing to garner community support, Jojo attended a GC Digital Initiatives event Tuesday as well as the English department’s Friday Forum. Additionally, initial invites for the launch went out to the Digital Fellows and DH Praxis friends and family via Paperless Post. Digital Fellow Ex Officio Micki Kaufman has already replied that she wouldn’t miss it. I’m now working to organize outreach with the other teams.

The press release is coming along on the class wiki, too!!

Corpus:

With functionality ironed out, we continue to work with the dataset we have generated via TANDEM for the Mother Goose corpus. As part of our release, we will include work that we have done in both analysis and data visualization for the initial test corpus. If you have questions or points of interest in Mother Goose feel free to comment them below! We are interested in hearing the kinds of questions one might ask of a text/image corpus.

HUAC

As we close in on the final weeks, we’ve come to realize that we may not be able to write a script that will do all that we want, search-wise. Fortunately, working with DocumentCloud as our database has allowed us to utilize their robust functionality, and we have used their tools to provide basic search and browse functions on our site. With these in place, we’re focused on polishing our front end, pitch, and documentation. We are also considering adding one more layer of fun…

We would like to position this project partly as a useful tool for historians, partly as a replicable template for building a front end to DocumentCloud, and partly as participatory digital scholarship.

On the participatory side, we’re considering creating and implementing a crowdsourcing platform to help with assigning the needed metadata to the individual testimonies.
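
A crowdsourcing platform also needs a rule for deciding when contributors agree on a piece of metadata. Here is a minimal sketch, assuming a simple majority vote with a minimum number of votes per field; both thresholds and the field names are hypothetical, not part of the Digital HUAC site.

```python
from collections import Counter


def consensus_metadata(submissions, min_votes=2, min_share=0.5):
    """Aggregate crowd-submitted metadata for one testimony (hypothetical rule).

    submissions: list of dicts from different contributors, with string values,
    e.g. {"witness": "...", "date": "..."}.
    Returns (accepted_values, fields_needing_editor_review).
    """
    by_field = {}
    for sub in submissions:
        for field, value in sub.items():
            # Normalize stray whitespace so "Jane Doe " and "Jane Doe" count together.
            by_field.setdefault(field, Counter())[value.strip()] += 1

    accepted, needs_review = {}, []
    for field, counts in by_field.items():
        value, votes = counts.most_common(1)[0]
        total = sum(counts.values())
        # Accept only a strict majority backed by enough independent votes.
        if votes >= min_votes and votes / total > min_share:
            accepted[field] = value
        else:
            needs_review.append(field)
    return accepted, sorted(needs_review)
```

Fields without agreement fall through to a review list, so the crowd does the bulk of the tagging while an editor settles the disputed cases.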

One of the early and lasting story lines behind the project has been making publicly accessible a collection of materials with a shadowy past and a curious relationship to public/private spaces, agendas, politics, and notions of guilt. At this stage, scholars would appreciate having the transcripts collected and rendered (simply) searchable; the scattered nature of the testimonies themselves is a major roadblock to HUAC studies that we’re trying to remove. Beyond that, incorporating crowdsourcing would resonate with the true spirit of the Digital HUAC project, which in a sense is the anti-HUAC project, by relying on contributions from the public. Including a wide array of contributors in documenting and publicizing material whose origins lie in silencing or coercing people seems powerful.

We’d love to hear input from you, our classmates, on this potential new addition to the project.

CUNYcast weekly update

CUNYcast has hit the ground running with great work all around.

Outreach & Management:

This week, outreach set up an amazing tabling event! We received over 30 signatures from people who are interested in casting! This Tuesday evening, we will be hosting a workshop for interested casters!

Developer:

To get our front-end Calendar and show info widgets working, we had to do two things:

Define sourceDomain: The installation guide for the widgets does a poor job of explaining this, but sourceDomain is the site you are pulling information from. The tutorials we were using stated that the source domain should be your public site address, but it actually isn’t. Our public site address is cunycast.net (the site we are sending information to), while the site we pull the widget information from is airtime.cunycast.net (the proper sourceDomain).
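
The distinction is easy to see in code. This Python sketch only builds request URLs (the Airtime widgets themselves are JavaScript), and the endpoint paths api/live-info/ and api/week-info/ as well as the http scheme are assumptions, not details confirmed in this post. The point is that every data request is built from sourceDomain, so it goes to airtime.cunycast.net and never to cunycast.net.

```python
from urllib.parse import urljoin

# Assumed scheme; the post gives only the host names.
PUBLIC_SITE = "http://cunycast.net/"            # where the widgets are displayed
SOURCE_DOMAIN = "http://airtime.cunycast.net/"  # where the widgets get their data


def widget_data_url(source_domain: str, endpoint: str) -> str:
    """Build the URL a widget requests: always relative to sourceDomain."""
    if not source_domain.endswith("/"):
        source_domain += "/"  # urljoin would otherwise drop the last path segment
    return urljoin(source_domain, endpoint)


# Hypothetical endpoint paths, used only for illustration.
for endpoint in ("api/live-info/", "api/week-info/"):
    print(widget_data_url(SOURCE_DOMAIN, endpoint))
```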

Remove iframe: Airtime recommends putting its widgets in an iframe, which stands for inline frame. An inline frame lets you embed another HTML document in a page, so you can define and manipulate rules within a specific section of your page. That said, iframes are finicky and hard to configure: not only do you have to figure out the proper dimensions for the frame on the page, but you also need to work between two .html files to get it working properly. So we took the contents of the iframe’s HTML file and embedded them directly in the <head> and <body> of our page.

Designer:

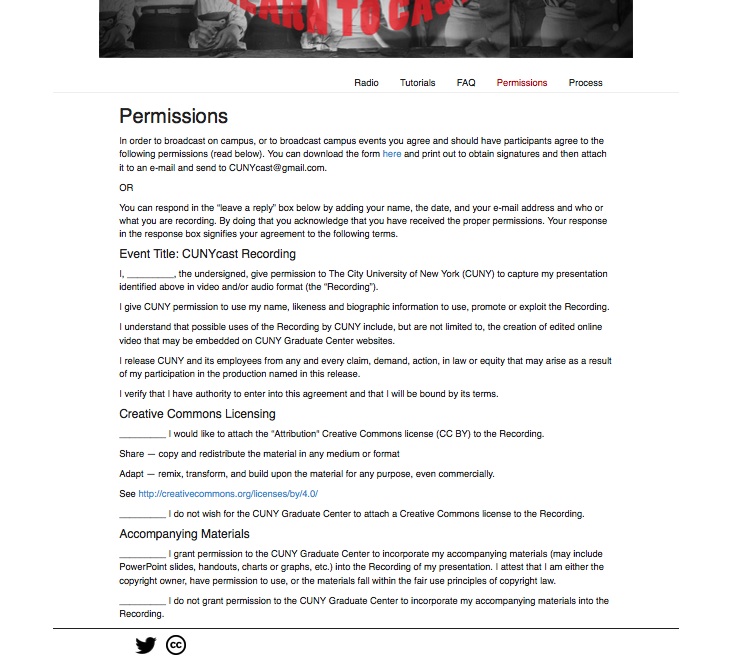

The website has been fleshed out, and we now have 5 pages.

The FAQ list covers a lot of uses for the site, and the Process page is going to be a great space for managing our longer-form tutorials and documenting our project development. This week, we will formally document our user testing and make the necessary changes to our site by running 5 user-testing sessions. We will open the website for a subject and ask them to respond, then ask them to use the tutorial page specifically and respond again. This should be a great way to finalize the language about our project.

YOU CANNOT SEE THE CHANGES LIVE YET, but… you will be able to see them Monday!

yay!

Stay tuned and I will update this post when the pages go live!

Fashion Index Weekly Update

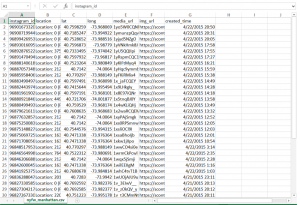

During this semester, we have been building a collection of Python scripts for pulling from Instagram and working on interacting with our database.

We added a “game” feature, built with Bootstrap, which will be central to the ethos of our website. The game also refers to the indexing page on the website, which we believe will give users curatorial power. The game invites participation; in this respect, our group is aiming for more interaction, since user engagement plays a critical role in this project.

We are planning to tag and archive more images in order to build deeper historical content. Each image needs latitude, longitude, time information, and a URL attached to it.
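
One way to make sure every archived image carries the required fields is a small record type that checks them when it is created. A sketch, assuming Python; IndexedImage, its field names, and the example values are hypothetical and not taken from the Fashion Index codebase.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class IndexedImage:
    """Hypothetical record for one archived image and its attached metadata."""

    url: str
    latitude: float
    longitude: float
    taken_at: datetime
    tags: list = field(default_factory=list)

    def __post_init__(self):
        # Reject coordinates and timestamps that can't be mapped or sorted reliably.
        if not -90 <= self.latitude <= 90:
            raise ValueError(f"latitude out of range: {self.latitude}")
        if not -180 <= self.longitude <= 180:
            raise ValueError(f"longitude out of range: {self.longitude}")
        if self.taken_at.tzinfo is None:
            raise ValueError("taken_at must be timezone-aware")

    def to_dict(self) -> dict:
        """Serialize for storage or a JSON API; time as an ISO 8601 string."""
        return {
            "url": self.url,
            "lat": self.latitude,
            "lng": self.longitude,
            "time": self.taken_at.isoformat(),
            "tags": list(self.tags),
        }
```

Requiring timezone-aware timestamps matters once images from other cities (Paris, London) join the index, since otherwise times from different places can't be compared.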

We are thinking about how we can contribute to both fashion studies students and DHers. The way we collect images, pulling them from Instagram through crowdsourcing, is an entirely community-facilitated process. We are looking for latent members of the community and their interests. Community building is integral because it will generate new types of communication among different users. Eventually, we hope to reach other parts of the fashion world, e.g., Paris and London. We also moderate and curate the data.

We have been discussing the possibilities and prospects of our theme in terms of the layers of interaction in fashion. To what extent does this concern the field? We are also questioning the power of the fashion world.

Team HUAC

This week, team Digital HUAC worked on refining our project narrative. This work dovetails with both outreach and site content: we’ll use narrative material to pitch potential users and partners and to beef up our site itself. Juliana developed a thorough “pitch kit” with relevant topics and questions, and in response we filled out sections such as “Challenges with the Current State of HUAC Records” and “Our Solution.” We feel that such an approach effectively communicates vital information to all parties. It also helps us think through issues concretely. Nothing forces you to articulate your project’s means and aims better than thinking about how strangers will interact with it all.

We also demoed a new MVP as a fallback plan. Given that we are gravitating towards fully leveraging DocumentCloud’s search interface, we experimented with embedding the DC viewer and search mechanism in our site itself. This is less than ideal: for one thing, it only rendered string-search results that didn’t make use of the robust, standardized metadata we took the time to tag each transcript with. But it was helpful to think about recasting our MVP just in case, and we welcomed the chance to get under the hood of DocumentCloud in more detail.
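
The gap between string search and metadata search shows up even with two toy transcripts. The data and field names below are invented for illustration and are not real HUAC records: a plain string search for "film" misses a hearing about the motion picture industry, while a filter on a tagged industry field finds it.

```python
# Invented toy records; "year" and "industry" are hypothetical metadata fields.
TRANSCRIPTS = [
    {
        "id": "t1",
        "text": "Testimony concerning the motion picture industry.",
        "meta": {"year": 1947, "industry": "film"},
    },
    {
        "id": "t2",
        "text": "The witness recalled attending a film screening at a union hall.",
        "meta": {"year": 1951, "industry": "labor"},
    },
]


def string_search(transcripts, query):
    """Case-insensitive substring match over the full text only."""
    q = query.lower()
    return [t["id"] for t in transcripts if q in t["text"].lower()]


def metadata_search(transcripts, **criteria):
    """Match transcripts whose tagged metadata equals every given criterion."""
    return [
        t["id"]
        for t in transcripts
        if all(t["meta"].get(k) == v for k, v in criteria.items())
    ]


print(string_search(TRANSCRIPTS, "film"))              # only the passing mention
print(metadata_search(TRANSCRIPTS, industry="film"))   # the hearing about film
```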

TANDEM Project Update 4.19.15

PROJECT:

DEVELOPMENT:

Steve continues to power away like some sort of half-man, half-robot, mostly magic developer. As of today, we have successfully incorporated single-file upload functionality into our Django app. Our next action items include:

- Testing the new upload functionality on the server

- Implementing multi-file upload and testing on localhost and our server

- Hooking up the necessary analytics engine to our Django configuration

- Adding validation and error checking
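
For the validation item, here is a minimal sketch of checking uploads before they reach the analytics engine. The allowed extensions and the 20 MB limit are assumptions, not TANDEM’s actual rules. In a Django view, the (name, size) pairs could come from the .name and .size attributes of each file in request.FILES.getlist(...), with the form field name left to the app.

```python
from pathlib import Path

# Assumptions for illustration only: page-image formats and a 20 MB cap.
ALLOWED_EXTENSIONS = {".jpg", ".jpeg", ".png", ".tif", ".tiff"}
MAX_BYTES = 20 * 1024 * 1024


def validate_uploads(files):
    """files: iterable of (filename, size_in_bytes). Returns (accepted, errors)."""
    accepted, errors = [], []
    for name, size in files:
        ext = Path(name).suffix.lower()  # ".JPG" and ".jpg" are treated alike
        if ext not in ALLOWED_EXTENSIONS:
            errors.append(f"{name}: unsupported file type '{ext or 'none'}'")
        elif size == 0:
            errors.append(f"{name}: file is empty")
        elif size > MAX_BYTES:
            errors.append(f"{name}: larger than {MAX_BYTES // (1024 * 1024)} MB")
        else:
            accepted.append(name)
    return accepted, errors
```

Collecting every error instead of stopping at the first one lets the UI report all rejected files from a multi-file upload at once.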

UI/UX:

With the technical side of the interactivity mapped, we are working on the mockups for the evolution of the front end. We are working through envisioning each step of the process that a user will experience in the TANDEM front end.

We have begun answering:

- What are the users met with as a landing page?

- Are there prompts for users ready to upload their files?

- As the files upload, what kinds of elements will show what is happening in the backend? (Progress bars, spinners, written prompts)

- Once the files are completed, what are the users met with?

- How does a table with our data fed into it look in the browser?

- What does the download page look like post-processing?

- Where and how are the downloads delivered?
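
For the in-browser table question, here is a minimal sketch of turning result rows into an HTML table, escaping every cell so that text pulled from documents can’t inject markup. The column names are hypothetical. Django templates autoescape variables by default, so this mostly matters for HTML built outside a template.

```python
from html import escape


def rows_to_html_table(headers, rows):
    """Render analysis rows as an HTML table, escaping every header and cell."""
    head = "".join(f"<th>{escape(str(h))}</th>" for h in headers)
    body = "".join(
        "<tr>" + "".join(f"<td>{escape(str(c))}</td>" for c in row) + "</tr>"
        for row in rows
    )
    return f"<table><thead><tr>{head}</tr></thead><tbody>{body}</tbody></table>"
```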

In a short time, we will be able to show in full color and depth each of the above.

Giving life to those mockups will be the capstone for our main body of work pre-presentation.

OUTREACH:

This week’s outreach centered around a number of events:

- Django NYC MeetUp this Wednesday

- Geoff Sechter continues to be a valuable resource, though his opinion seems to be that jQuery is our best bet for uploading multiple files. He’s been super patient in helping explain the particularities of Python, as well.

- Peter Karp of Buzzfeed also had interesting ideas and recommended attending the OpenCV Office Hours hosted at Buzzfeed by Andrew Kelleher, Adam Kelleher and Katarina Kufieta. Their next meetup is April 21 http://www.meetup.com/NYC-OpenCV-Study-Group/events/221727855/.

- Theorizing the Web 2015 at ICP

- While the many fascinating panels on surveillance did not bear directly on TANDEM, several artists spoke whose work involves text and image, including Claudia Pederson @cc3pc and Nicholas Knouf @zeitkunst, who work on #artforspooks, and Ben Grosser, @bengrosser, who created #scaremail. Another interesting talk treated Victorian cartes de visite as early social media.

- Spoke more with Erin Glass about potential publicity for TANDEM

- The Verge NYC after party @Thoughtworks

- On Tessa’s invitation, Jojo attended the closing party for the workshop week for innovative design

- Met John Bruce, Assistant Professor of Strategic Design & Management at the New School, who seemed interested in DH overlap

- Ran into Hannah Lane who does UX at Thoughtworks — a contact point should things get thorny moving forward with TANDEM UI/UX