The Team:

Min: Designer

Tessa: Developer

Renzo: Outreach

You Gene: Project Manager

Abstract

In this project we will fundamentally navigate what people wear in their daily life for the sake of function and beauty, and how they like to show it off to the world through the internet. Fashion has a long history, but today fashion due to the rise of the Internet and SNS (Social Network Services) fashion now has a chronological narrative. In particular, instagram provides an enormous amount of images as people post their daily outfits. The collection of images can be indexed and achieved. As a result, it becomes a fashion trend with a wide range of colors, patterns, and styles.

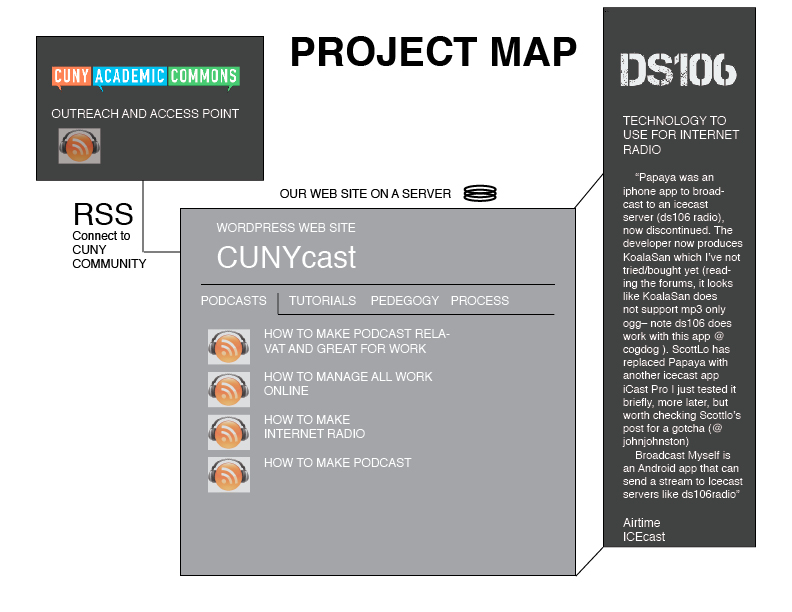

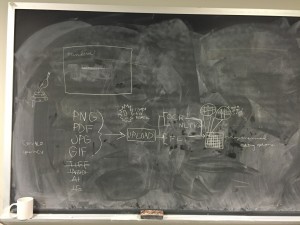

Our methodology is creating a visual timeline of fashion trends in New York based on harvesting the keywords and geospatial data from instagram. On instagram, we will focus on collecting and analyzing hashtags taken in New York, specifically one hashtag, “sprezzatura.” To do data mining, we will mainly utilize API, which provides statistics and help analyzing the trend and popularity. We also want to emphasize a user friendly aspect to the design of the final project with the possibility that viewers will come to the site and see a wall of photos and clicking on one would bring up information and statistics linking it to other photos.

The main audiences for this project are people who belong to fashion communities, including designers, fashion journalists, and those interested in fashion studies and trends. Yet, there is no social index of fashion images. We pursue our goal that this project will lead to create a new social index for fashion. We also aim to create a lively updated visualization datasets what we have collected. In addition, our goal is to define and investigate future fashion trends by looking back our fashion chronology. We expect this project will bring fashion into the dialogue of digital humanities and open up interdisciplinary possibilities between digital humanities and fashion studies.

Keywords: fashion, instagram, data mining, API, social index, sprezzatura, fashion trend, visual timeline

Environmental Scan:

Fashion acts as a barometer of popular tastes, social trends, and socio-economic climate of a place and time. Significantly, fashion schools in New York including MAD, Pratt and Parsons New School reflect scholarly approaches in fashion studies. Social Network Services, especially Instagram, Twitter, and Snapchat capture the now and at the best of time it can represent a momentary unified cultural experience, or at worst, fleeting moment to moment frivolities demanding your attention.

As of December in 2014, Instagram reported there were over 300 million unique users uploading more than 70 million photos and videos every day. The New York Times deemed this phenomenon as “fashion in the age of Instagram.” A new era has arrived where digital media is changing not only the way clothes are presented, but even the way they are designed. Active users help enriching and indexing databases by interacting with images and videos in real time. For example, if an Instagram user tagged an image with #sprezzatura, this image would function as the index on the site. Then, he or she would search the image and check if it matched with one’s own standard. If three users negate the image, the images would be discarded from the historical timeline. Unfortunately, these types of applications are not designed to an archival study in mind, so all the pictures taken can be outdated and lost. Although new digital tools offer novel access to capture and share images, they do not include the voices of individuals or trends outside the mainstream fashion world.

Recently, a proliferation of fashion forums as known as thefashionspot.com and lookbook.nu allow to share the snapshots and comments on current fashion trends. Although these sites host a wealth of fashion community images, they are often niche curated by fashion experts. Fashion has developed as a history told by the victors, meaning the designers and brands that endure financially and the celebrities they dress, while leaving small designers out of the narrative. There is no fashion social index on fashion images. Thus, we pursue a big data-drive look for fashion timelines which has not been operated by fashion experts. Instead, we will aggregate the imageries which help produce and discover new types of fashion languages. Franco Moretti discussed this aggregation approaches in his book Graphs, Maps, Trees that “the lost 99 percent of the archive and reintegrating it into the fabric of literary history.” In this context, we will bring the 99% of the forgotten fashion archives and replace it into the dialogue. This project will lay the groundwork for how we can capture snapshots in fashion at a specific time and place.

What problem does this solve?

This project provides a more permanent snapshot of rapidly changing fashion trends by pulling them from sources that are not designed with archiving in mind (Instagram). The internet has this simultaneous problem of hanging around forever while also being here today, gone TODAY.

What lacuna does it fill?

In cities like New York there is a constant demand for data on fashion tastes and industry.

By Aggregating scattered sources of data and crowdsourcing we will create a more unified view of fashion in New York in a way that was previously unfeasible due to a lack of archiving.

Issues of broken images and credibilities of data.

What similar projects are there?

There are ongoing projects to digitize fashion records, namely the Vogue Archive, The Women’s Wear Daily Archive, and the Council of Fashion Designers of America Archive, but each of these are institution-specific. The Bloomsbury Fashion Photography Archive, part of the Berg Fashion Library, is in the process of archiving over 600,000 images indexed by expert scholars, but these images are mainly comprised of runway photos from the Niall McInerney Fashion Photography Archive. There has been a proliferation of fashion forums in recent years, such as the thefashionspot.com and lookbook.nu, where fashion enthusiasts gather to share photos of themselves and comment on current fashion.

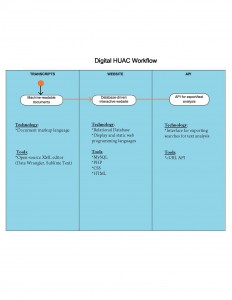

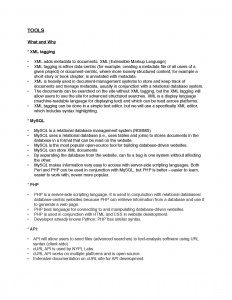

What technologies will be used?

Instagram API- data mining- digging instagram, spatial analysis, some basic website building tools like WordPress. Integrating analytics data into visualization.

Which of these are known?

Data, layer verification, indexing, database, tags, the NYPL picture collection

Which need to be learned?

We need to learn how to yank the API from Instagram through Apigee, better understanding of analytics integration and UX design.

How will the project be managed?

Documents and communication will be retrained on Google drive, but we’re also considering looking at the Graduate Center Redmine.

Milestones

Week 1: Gathering the team, organizing roles, defining tasks

Week 2: Website mockup draft and project text and branding drafts

Week 3: Website live with project description and placeholder for indexing interface

Week 4: Social media accounts live, project promotion begins

Week 5: Instagram API loads into indexing interface

Week 6: Users can interact with indexing interface to tag and validate images

Week 7 – 10: Ongoing indexing and data cleaning

Week 11: Initial visualizations prepared

Week 12: Final visualizations uploaded to website